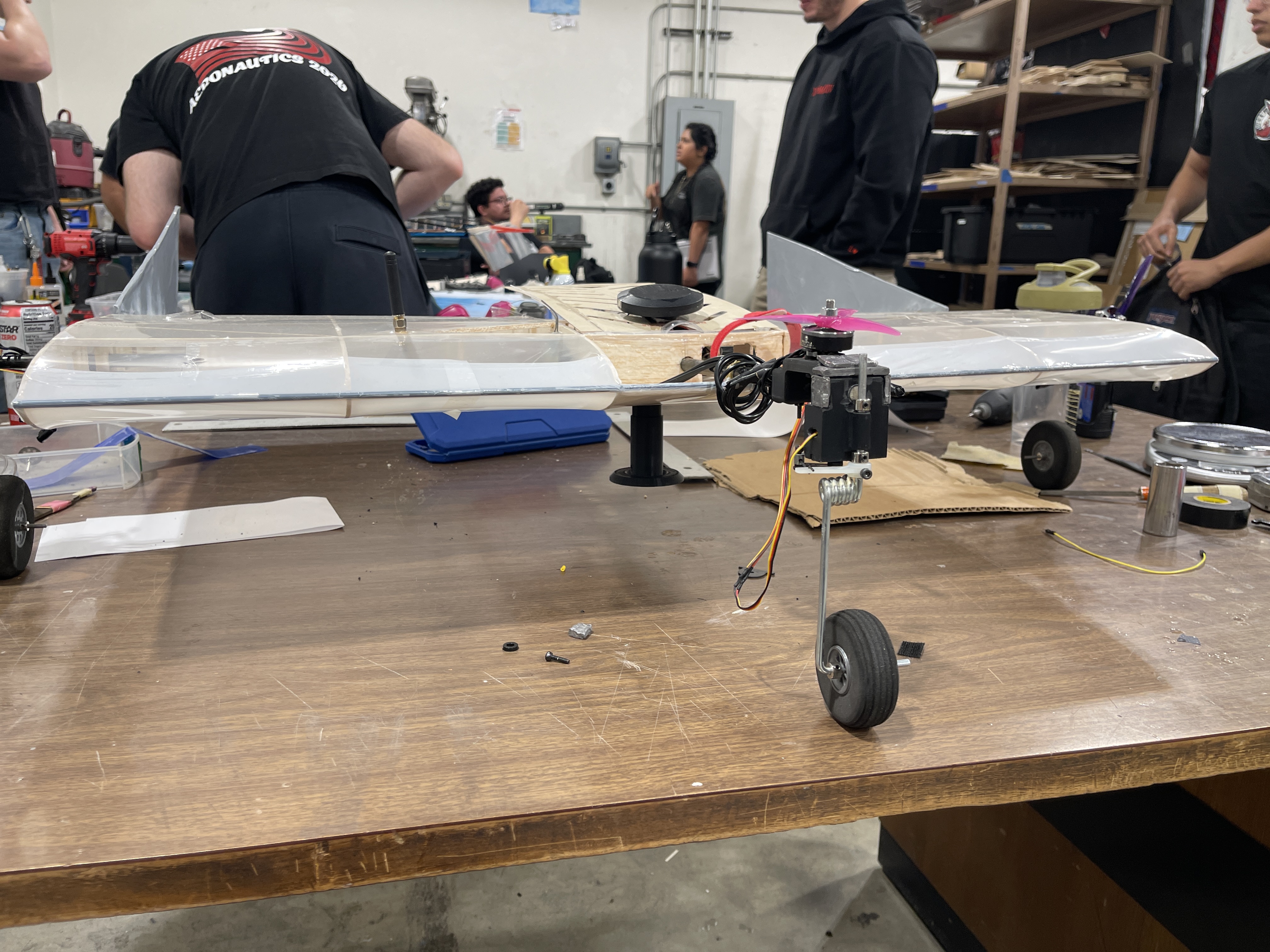

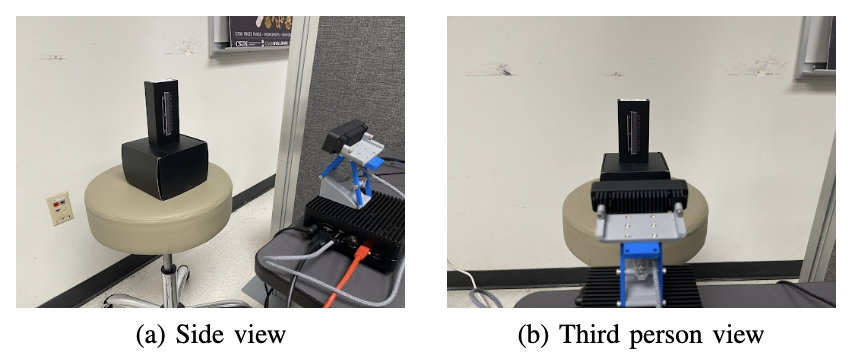

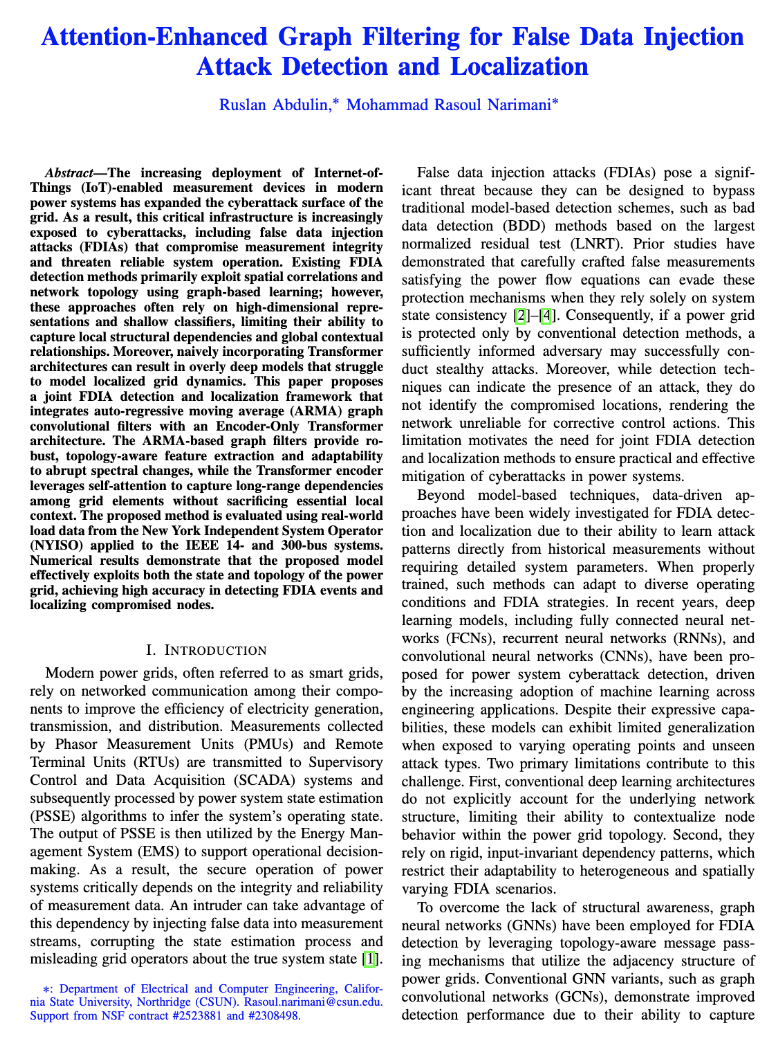

On-Board Computer for a Drone, SAE International Competition 2026

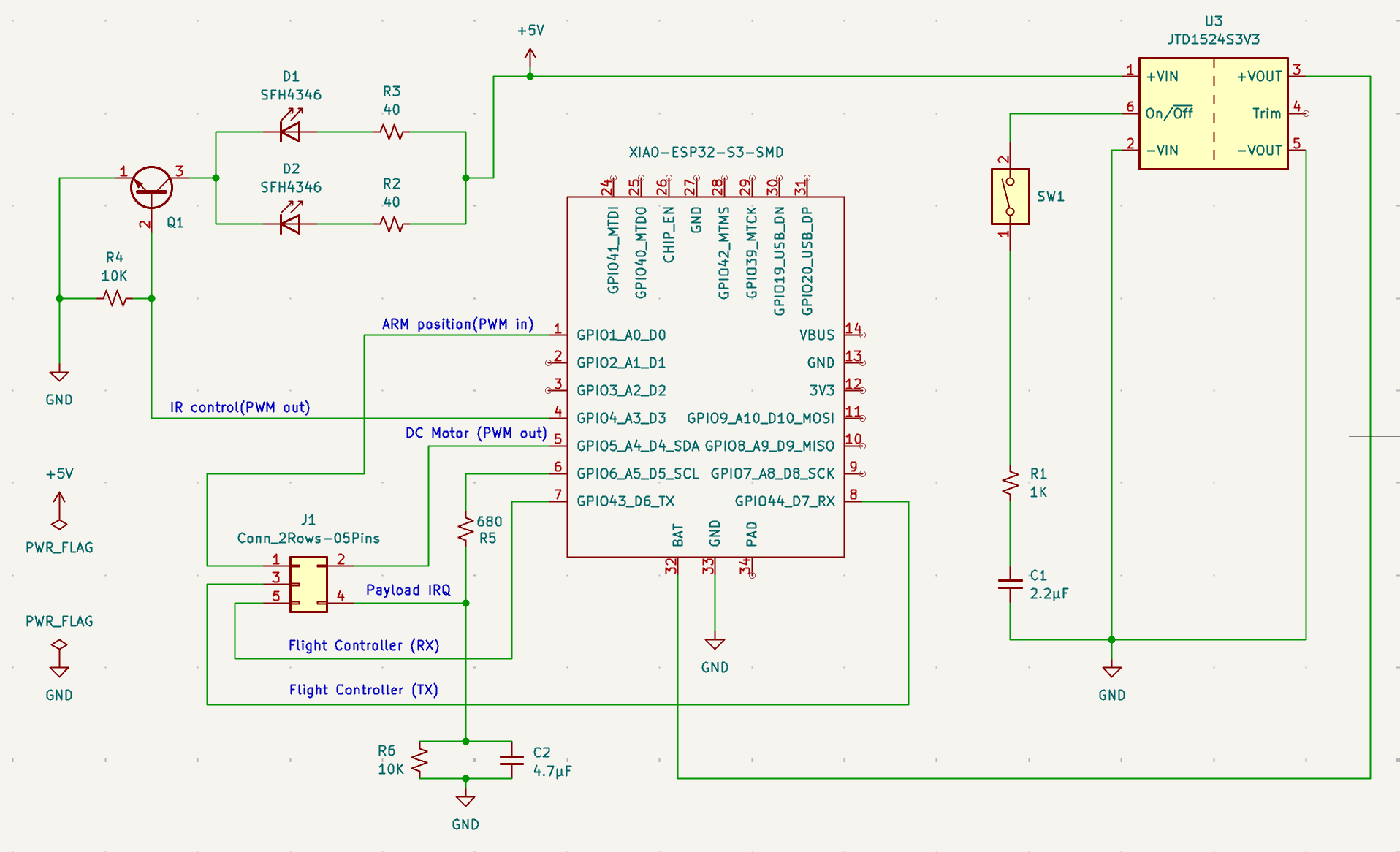

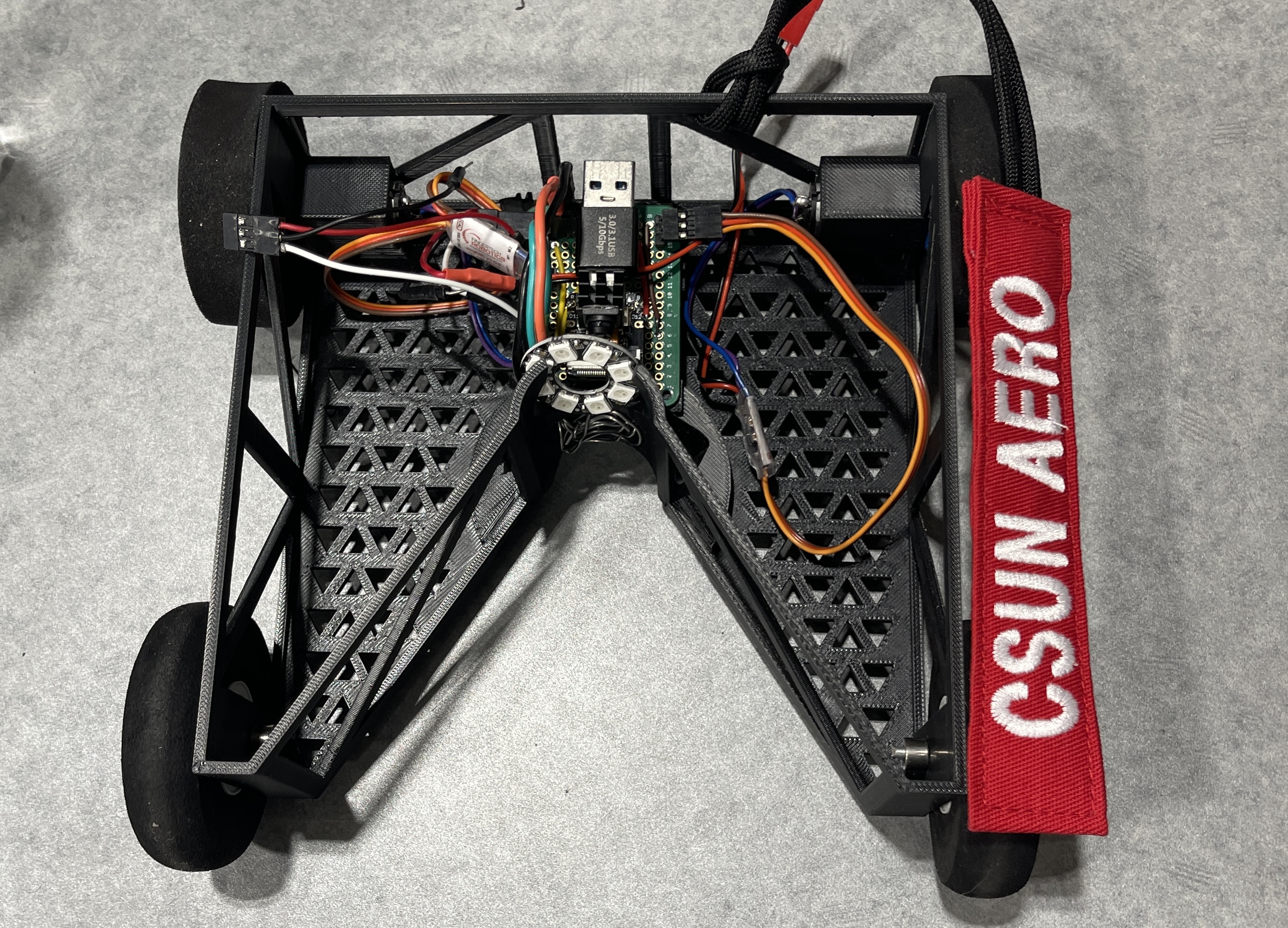

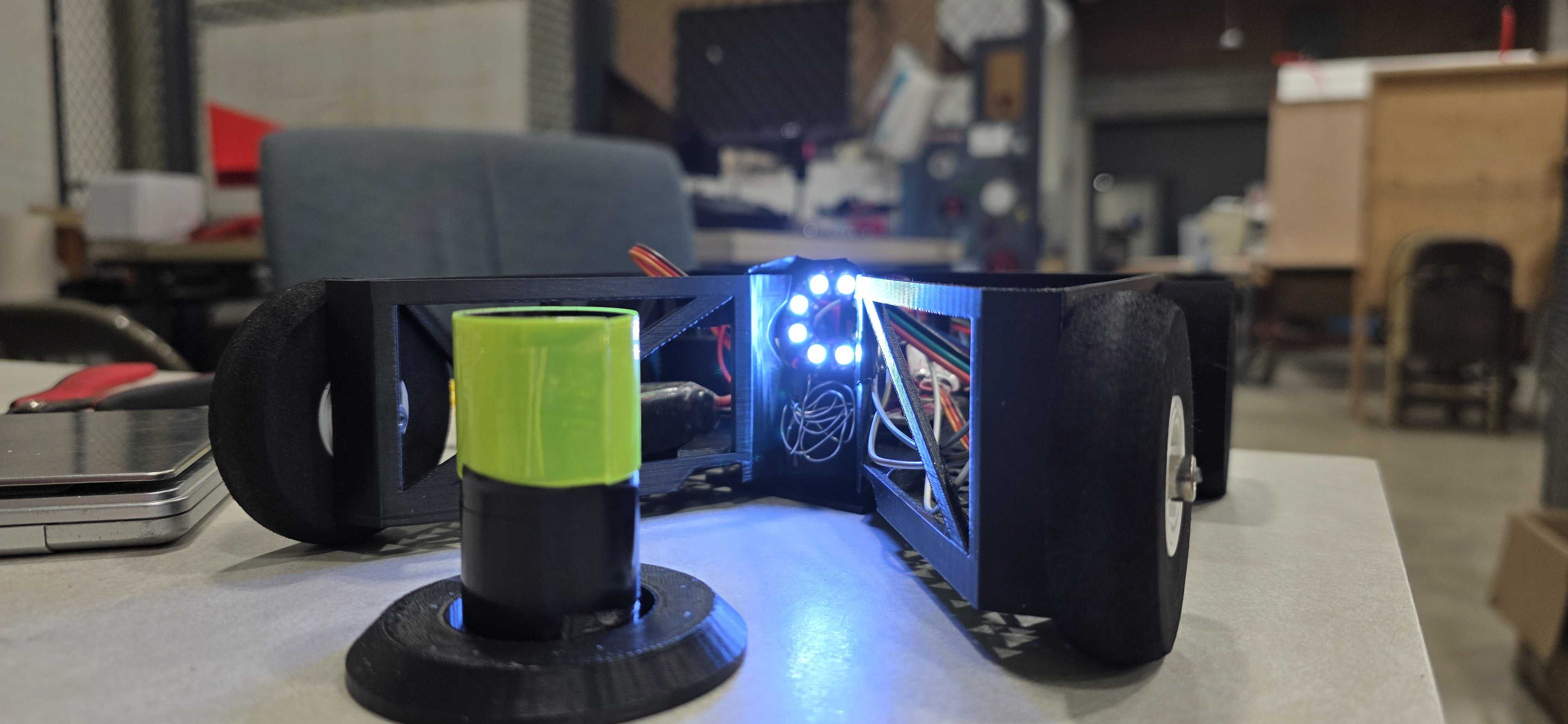

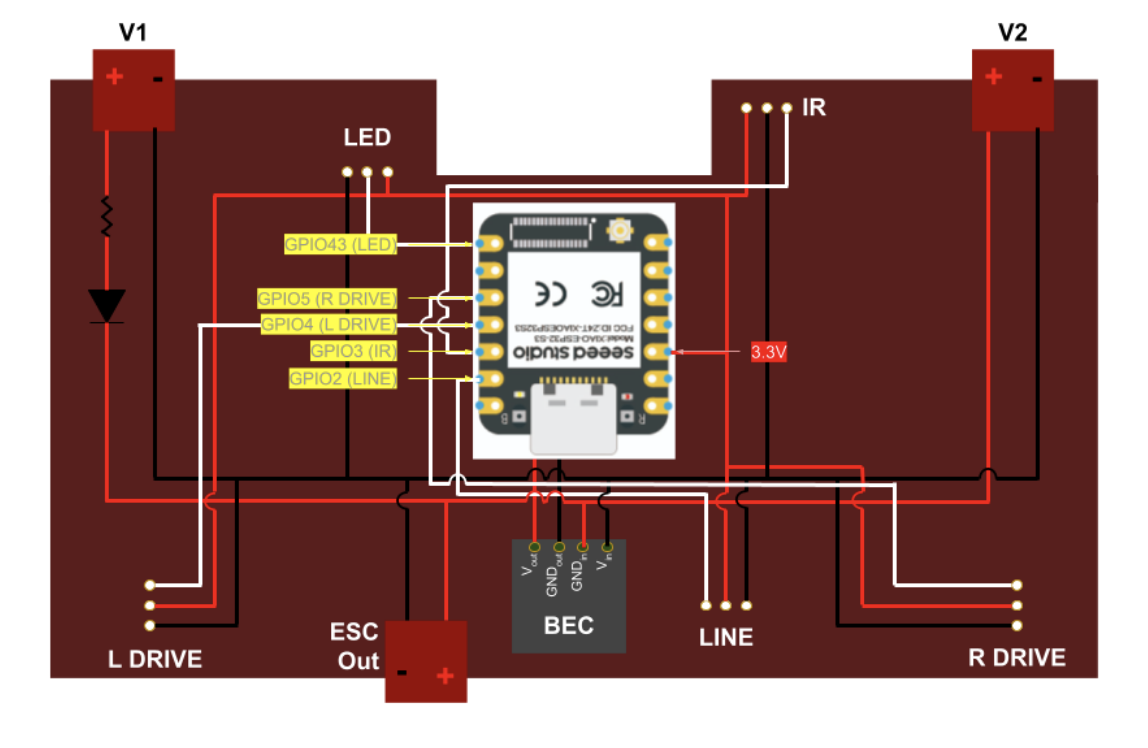

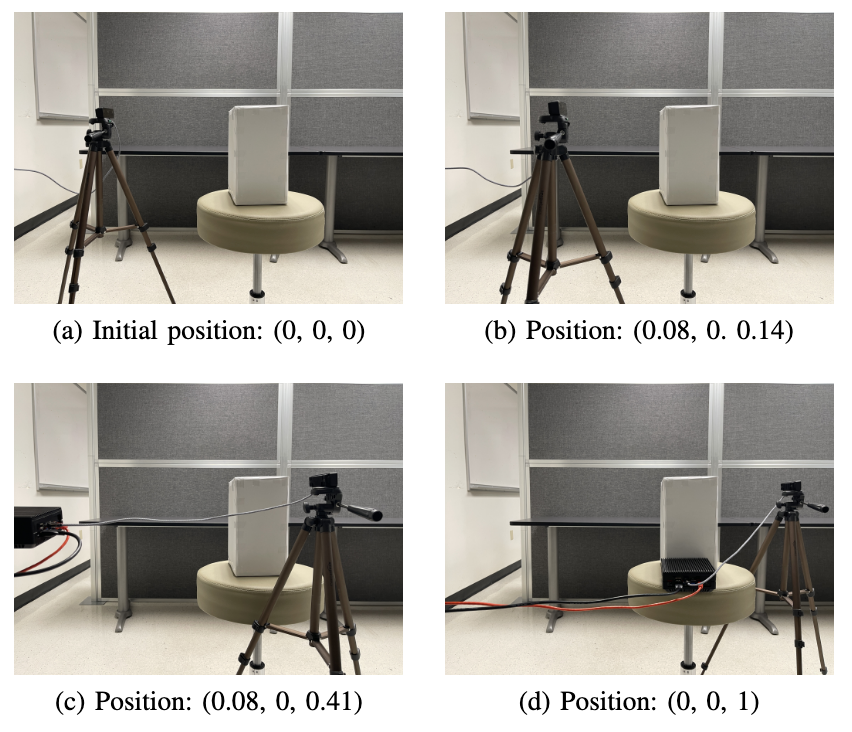

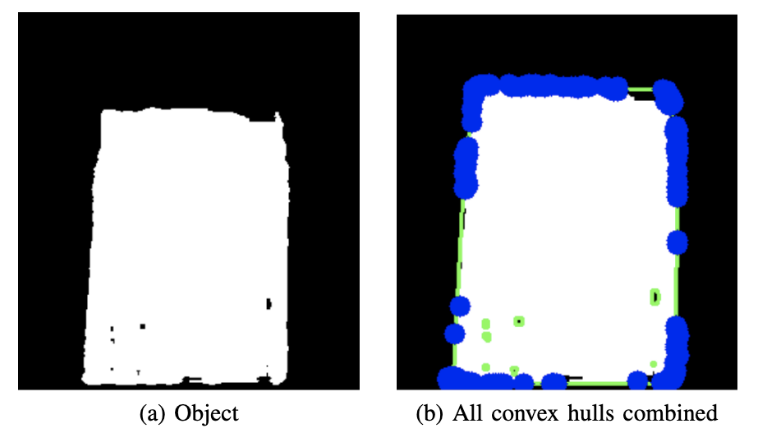

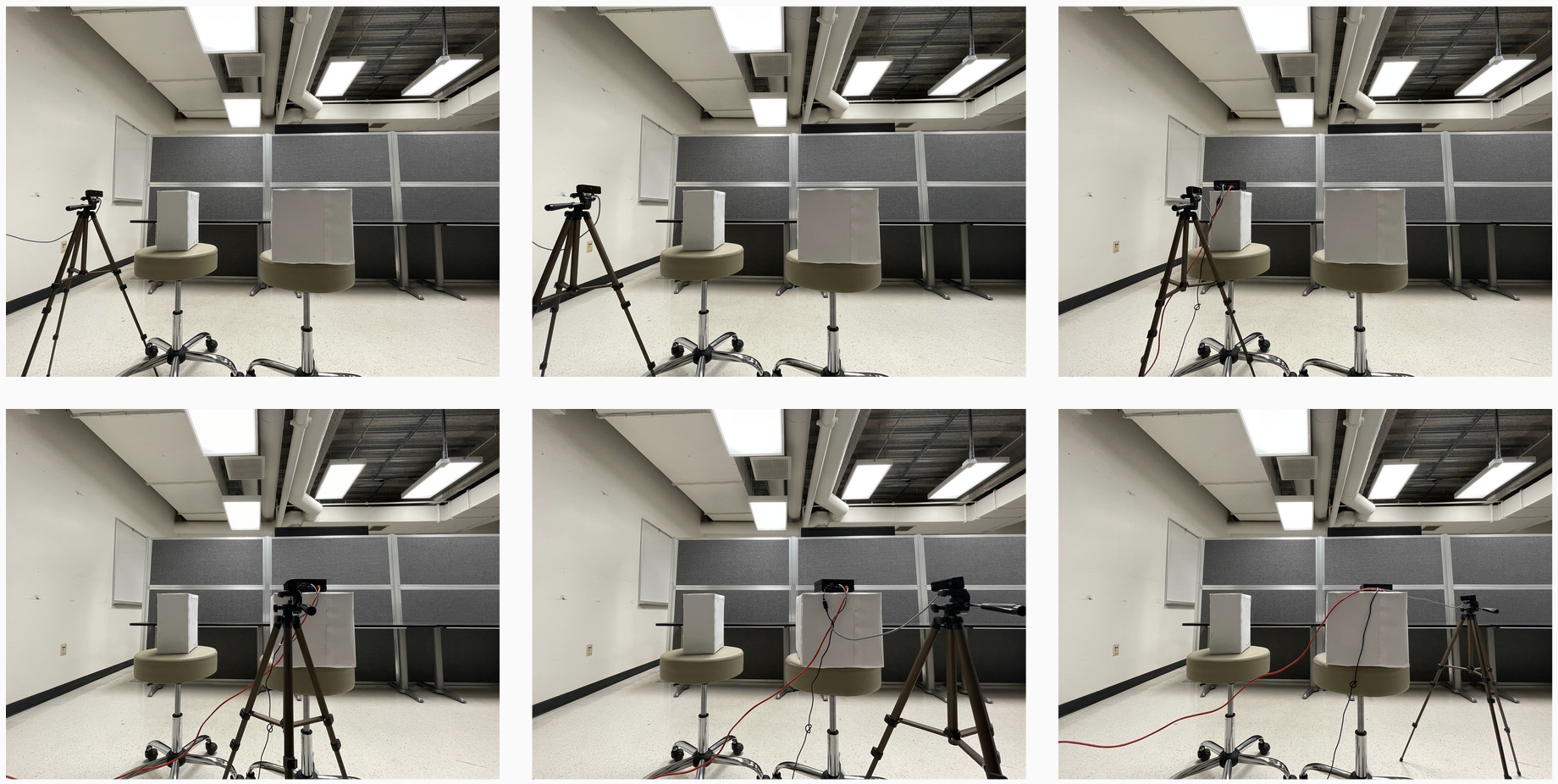

The onboard computer communicates over UART with any flight controller that supports the MAVLink protocol, so that it can promptly pick up a dynamic payload after landing. It combines a state machine with an RTOS to ensure that the pick-up procedure runs with correct timing and that the mission can be repeated without rebooting. The procedure consists of sending a series of infrared commands, lowering the gate until it touches the ground, detecting that the payload is inside, raising the gate with the payload, and signaling the drone that it is time to take off.
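
To show what the UART link has to verify on each incoming MAVLink frame, here is a minimal sketch that checks a MAVLink v2 frame checksum (CRC-16/MCRF4XX seeded with 0xFFFF, followed by the per-message CRC_EXTRA byte). The function names are hypothetical and this is not the project's code. In practice, the generated MAVLink C library already performs this check inside `mavlink_parse_char()`, which is fed one UART byte at a time.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define MAV2_STX     0xFD  /* MAVLink v2 start-of-frame marker */
#define MAV2_HDR_LEN 10    /* STX + 9 header bytes (len ... msgid[3]) */

/* One step of CRC-16/MCRF4XX (X.25 polynomial, init 0xFFFF, no final XOR).
 * Same arithmetic as crc_accumulate() in the MAVLink C library. */
uint16_t crc16_step(uint8_t byte, uint16_t crc) {
    uint8_t tmp = (uint8_t)(byte ^ (uint8_t)(crc & 0xFF));
    tmp ^= (uint8_t)(tmp << 4);
    return (uint16_t)((crc >> 8) ^ ((uint16_t)tmp << 8) ^
                      ((uint16_t)tmp << 3) ^ (tmp >> 4));
}

/* Checksum over a buffer, starting from the MAVLink seed 0xFFFF. */
uint16_t mav_crc(const uint8_t *buf, size_t len) {
    uint16_t crc = 0xFFFF;
    for (size_t i = 0; i < len; i++) {
        crc = crc16_step(buf[i], crc);
    }
    return crc;
}

/* True if `frame` starts with a complete MAVLink v2 frame whose checksum
 * matches. `crc_extra` is the per-message seed from the message definition
 * (e.g. 50 for HEARTBEAT). An optional signature after the checksum is
 * not checked here. */
bool mav2_frame_ok(const uint8_t *frame, size_t n, uint8_t crc_extra) {
    if (n < MAV2_HDR_LEN + 2 || frame[0] != MAV2_STX) {
        return false;
    }
    size_t payload_len = frame[1];
    size_t crc_pos = MAV2_HDR_LEN + payload_len;
    if (n < crc_pos + 2) {
        return false;  /* frame not fully received yet */
    }
    /* The checksum covers everything after STX up to the end of the
     * payload, followed by the crc_extra byte; it is sent little-endian. */
    uint16_t crc = mav_crc(frame + 1, crc_pos - 1);
    crc = crc16_step(crc_extra, crc);
    uint16_t rx = (uint16_t)(frame[crc_pos] | (frame[crc_pos + 1] << 8));
    return crc == rx;
}
```

A frame that fails this check should simply be dropped; the receiver then resynchronizes on the next `0xFD` byte.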
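
The pick-up procedure maps naturally onto a state machine. The sketch below is one possible shape for it, not the project's actual firmware: the state names, the input flags, and the idea of deriving `landed` from a MAVLink `EXTENDED_SYS_STATE` message are all hypothetical. The transition function is kept free of I/O, so an RTOS task only has to sample inputs, call it, and act on the returned state.

```c
#include <stdbool.h>

/* Hypothetical states mirroring the pick-up procedure. */
typedef enum {
    ST_IDLE,            /* waiting for the flight controller to report landing */
    ST_SEND_IR,         /* sending the infrared commands */
    ST_LOWER_GATE,      /* lowering the gate until it touches the ground */
    ST_WAIT_PAYLOAD,    /* waiting until the payload is detected inside */
    ST_RAISE_GATE,      /* raising the gate with the payload */
    ST_SIGNAL_TAKEOFF   /* telling the drone it may take off */
} pickup_state_t;

/* Hypothetical inputs, sampled once per period of the RTOS task. */
typedef struct {
    bool landed;          /* e.g. from a MAVLink EXTENDED_SYS_STATE message */
    bool ir_done;         /* all infrared commands have been sent */
    bool gate_on_ground;  /* gate contact with the ground detected */
    bool payload_inside;  /* payload sensor triggered */
    bool gate_raised;     /* gate back at its upper position */
    bool takeoff_acked;   /* drone acknowledged the takeoff signal */
} pickup_inputs_t;

/* Pure transition function: returns the next state for the current inputs.
 * Going back to ST_IDLE at the end lets the mission repeat without a reboot. */
pickup_state_t pickup_step(pickup_state_t s, const pickup_inputs_t *in) {
    switch (s) {
    case ST_IDLE:           return in->landed         ? ST_SEND_IR        : s;
    case ST_SEND_IR:        return in->ir_done        ? ST_LOWER_GATE     : s;
    case ST_LOWER_GATE:     return in->gate_on_ground ? ST_WAIT_PAYLOAD   : s;
    case ST_WAIT_PAYLOAD:   return in->payload_inside ? ST_RAISE_GATE     : s;
    case ST_RAISE_GATE:     return in->gate_raised    ? ST_SIGNAL_TAKEOFF : s;
    case ST_SIGNAL_TAKEOFF: return in->takeoff_acked  ? ST_IDLE           : s;
    }
    return ST_IDLE;  /* fallback for an out-of-range state value */
}
```

In a FreeRTOS-based build, for example, a periodic task paced with `vTaskDelayUntil()` could call `pickup_step()` once per tick, which gives the procedure deterministic timing. Because the transitions are pure, they can also be unit-tested on a host machine.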

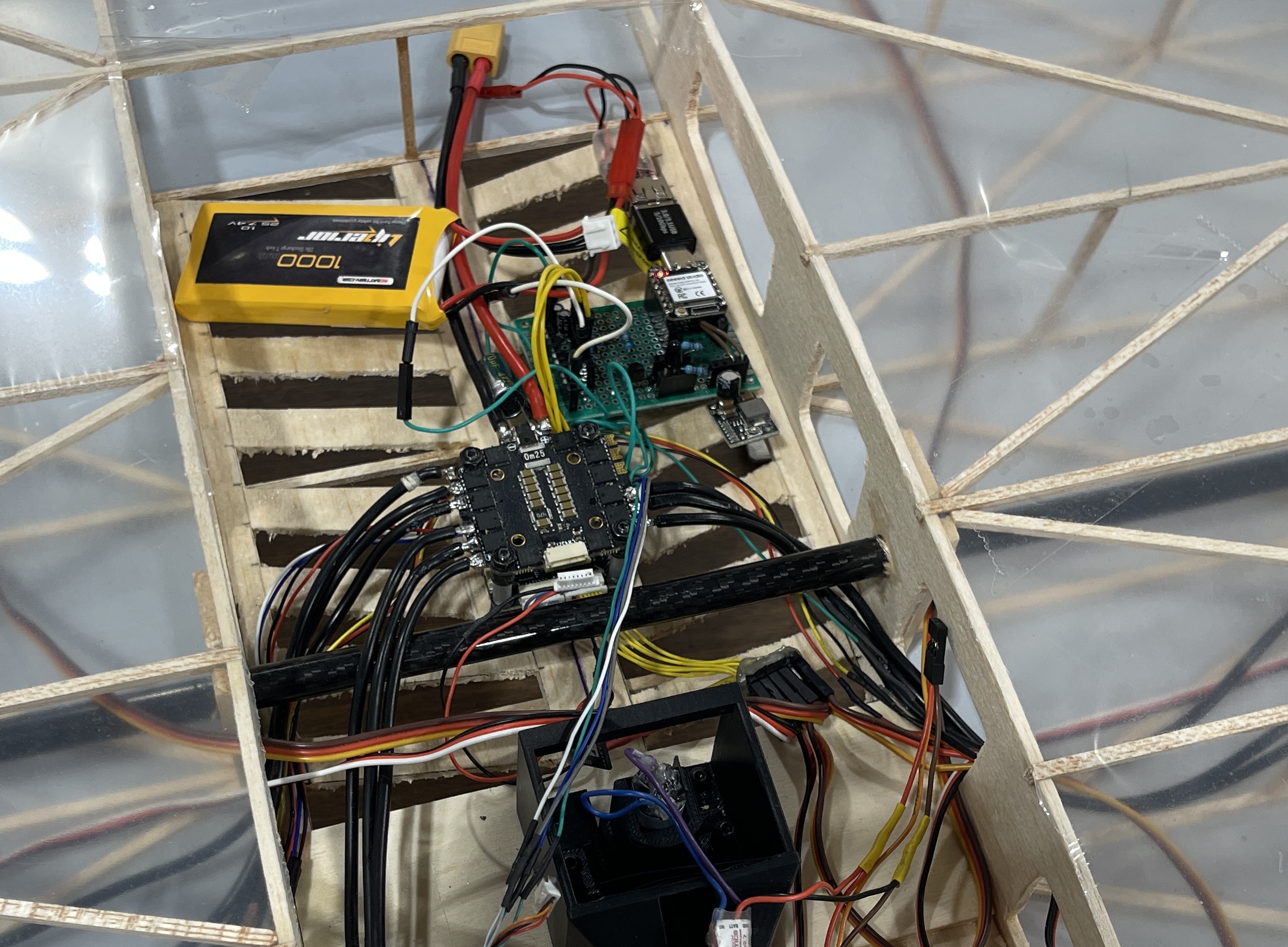

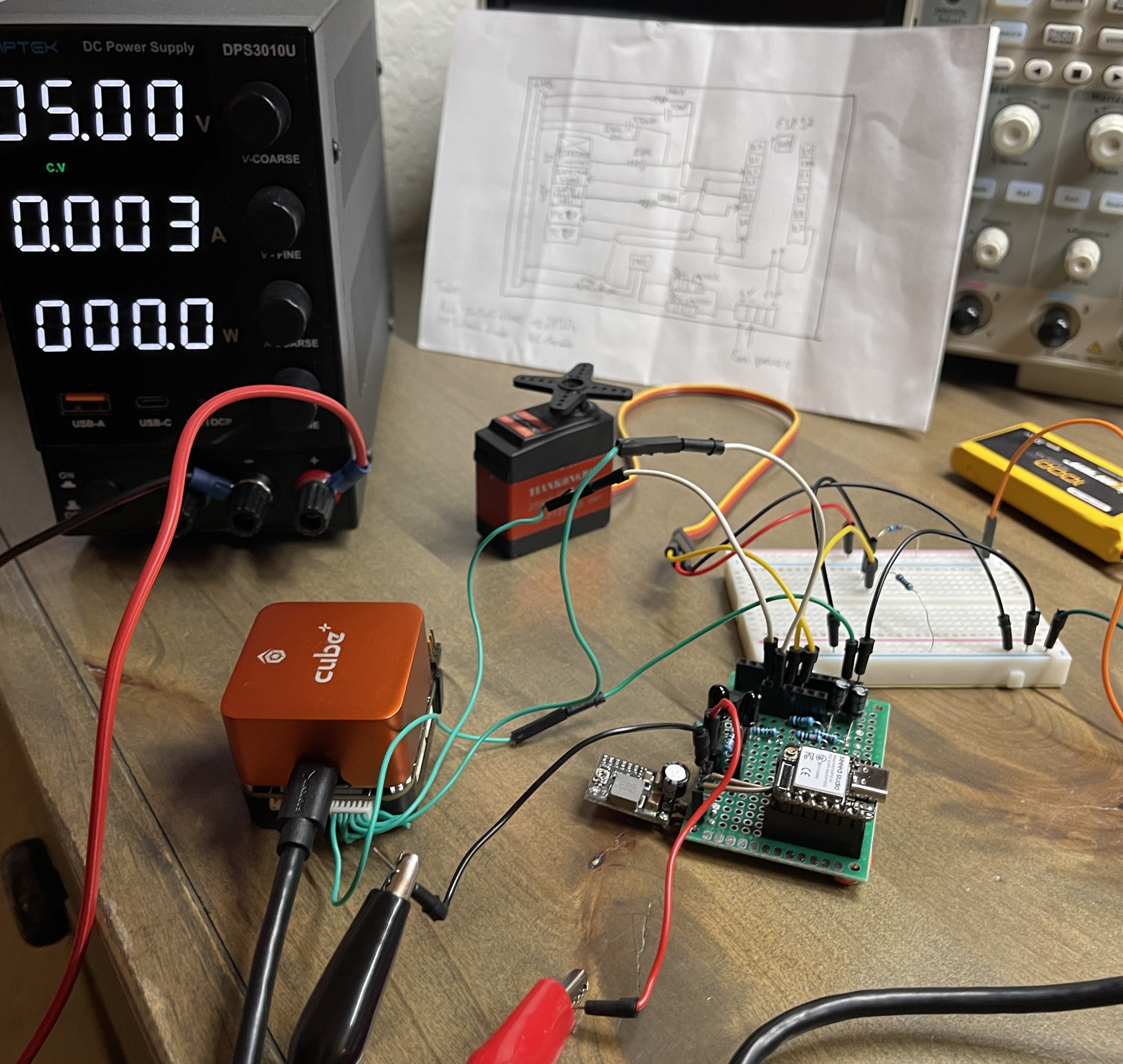

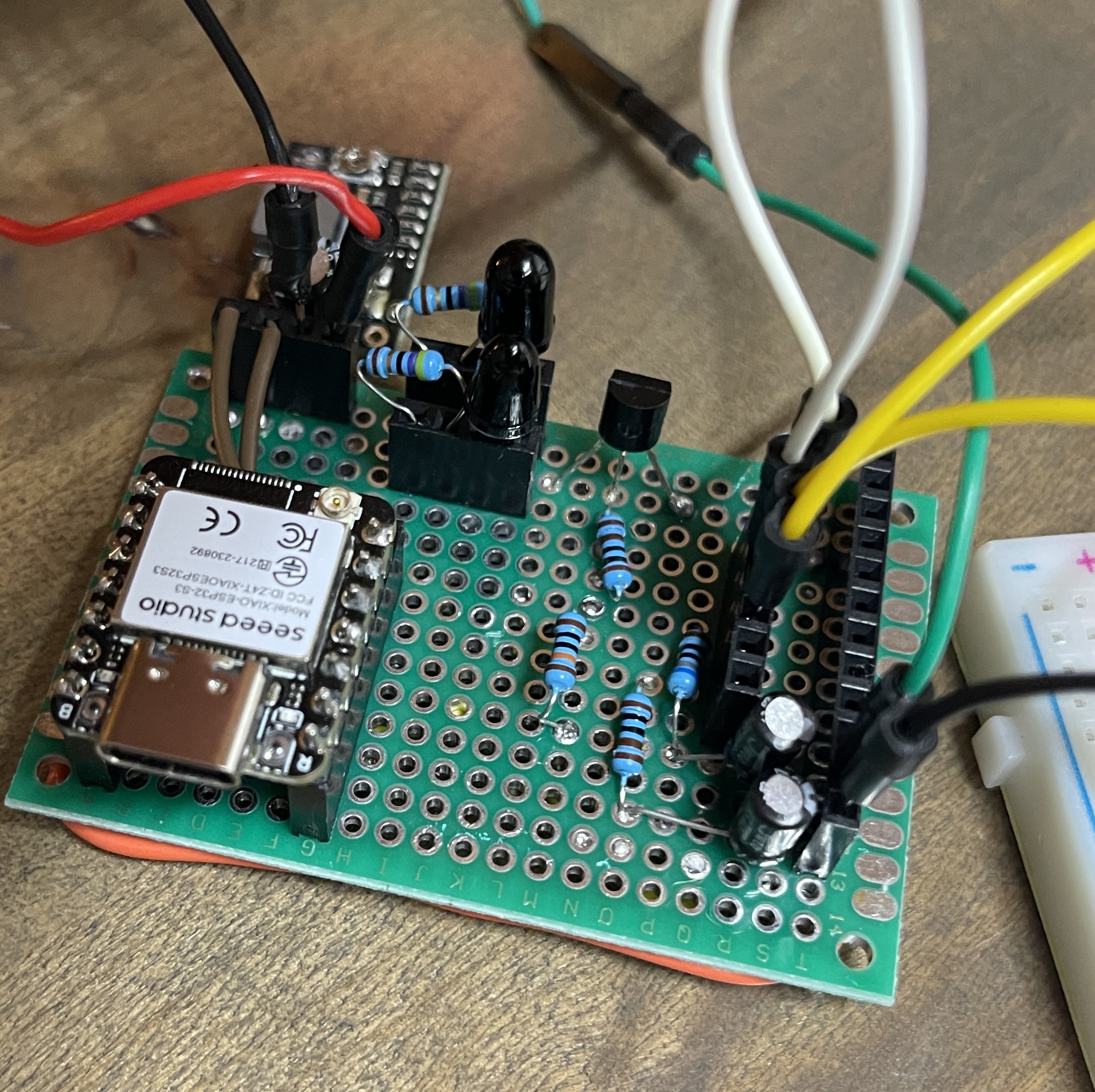

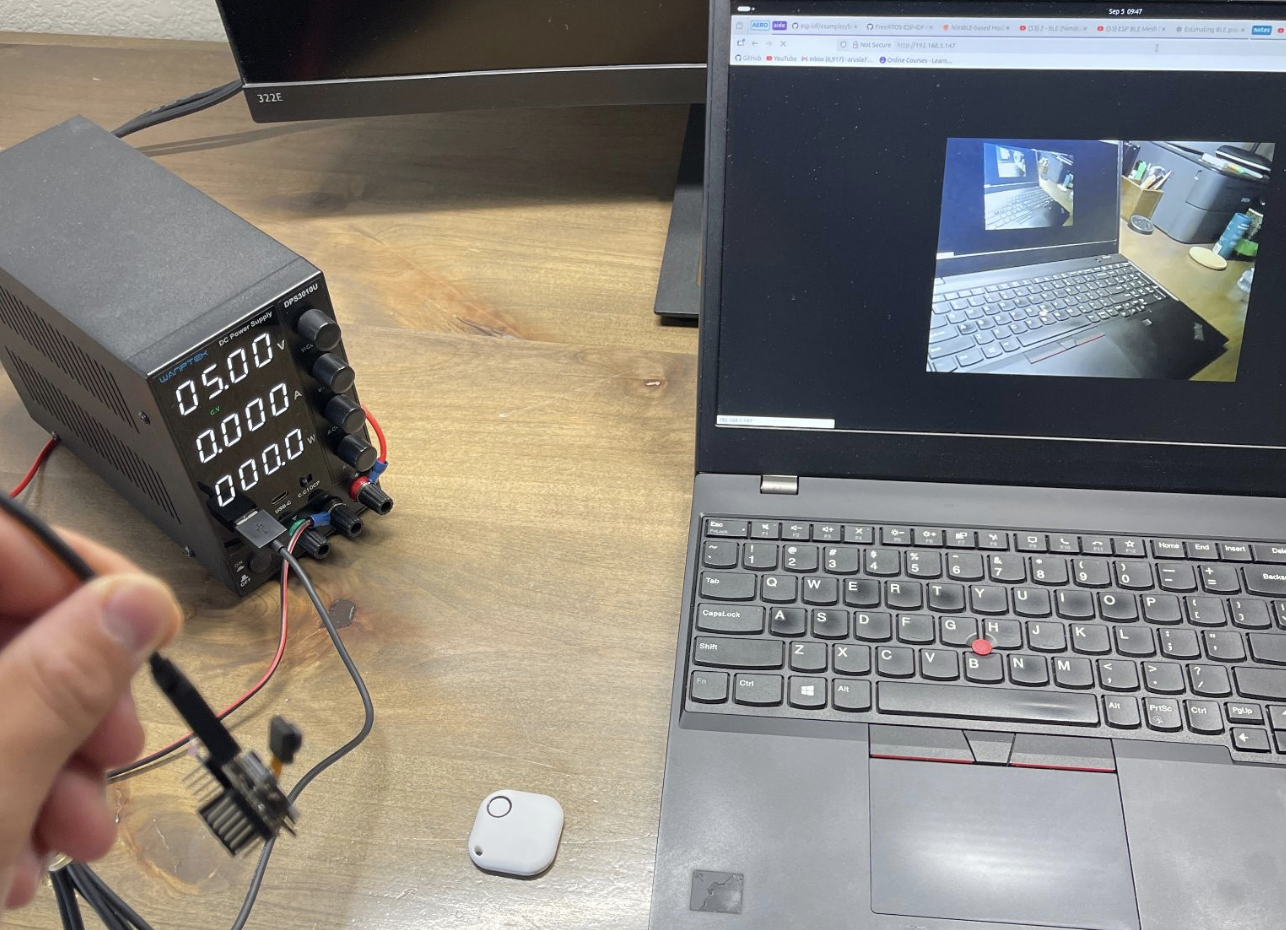

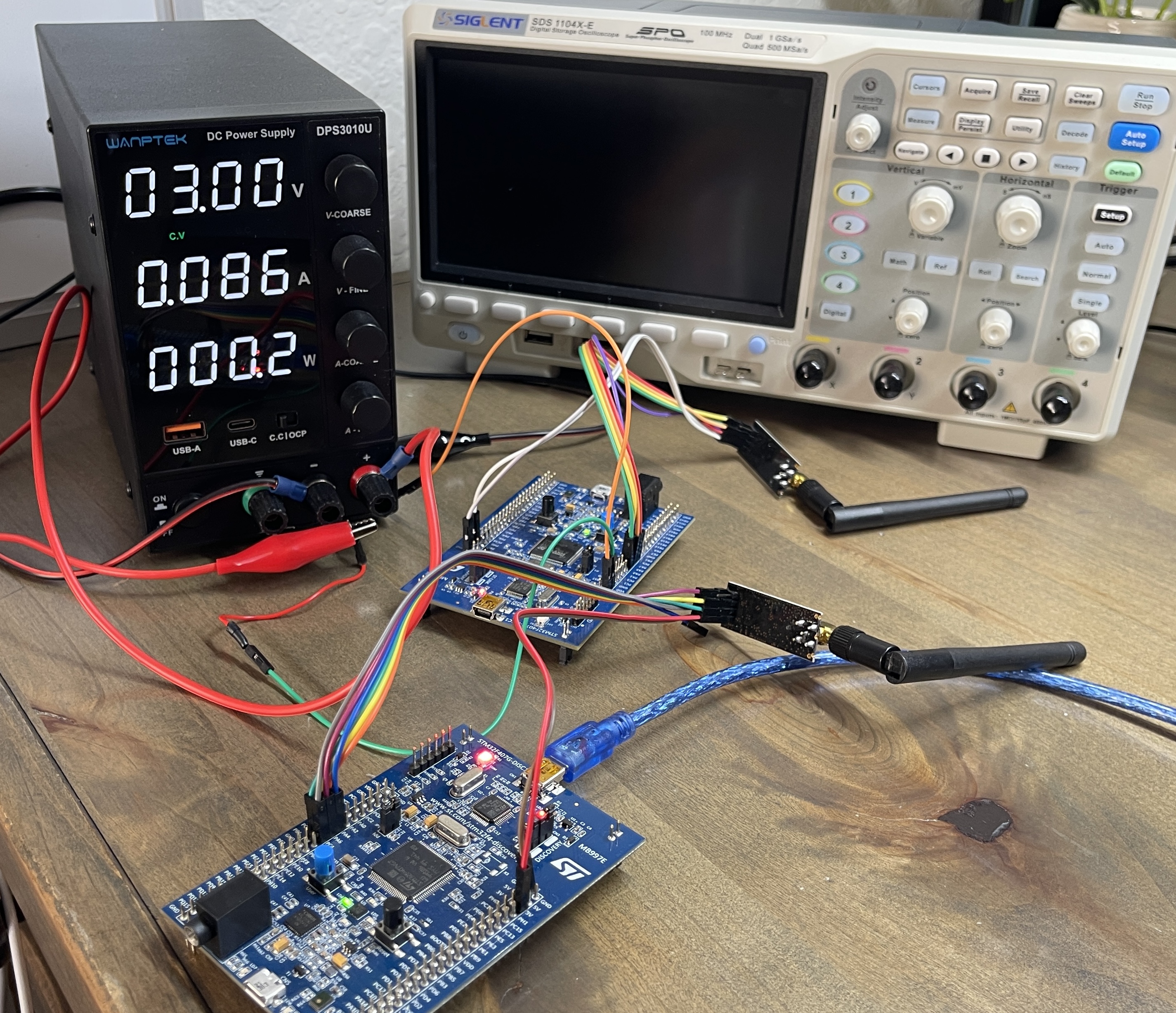

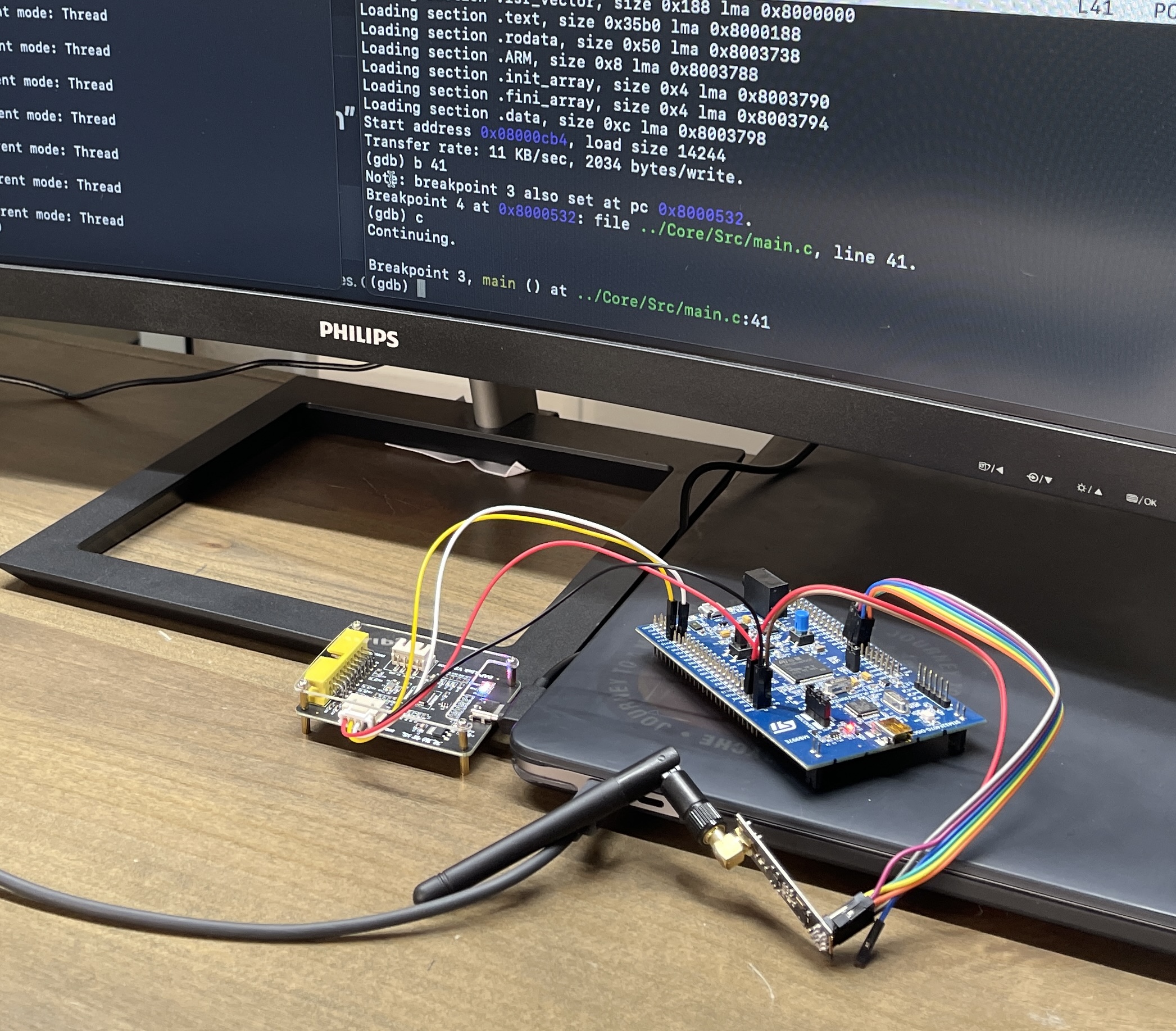

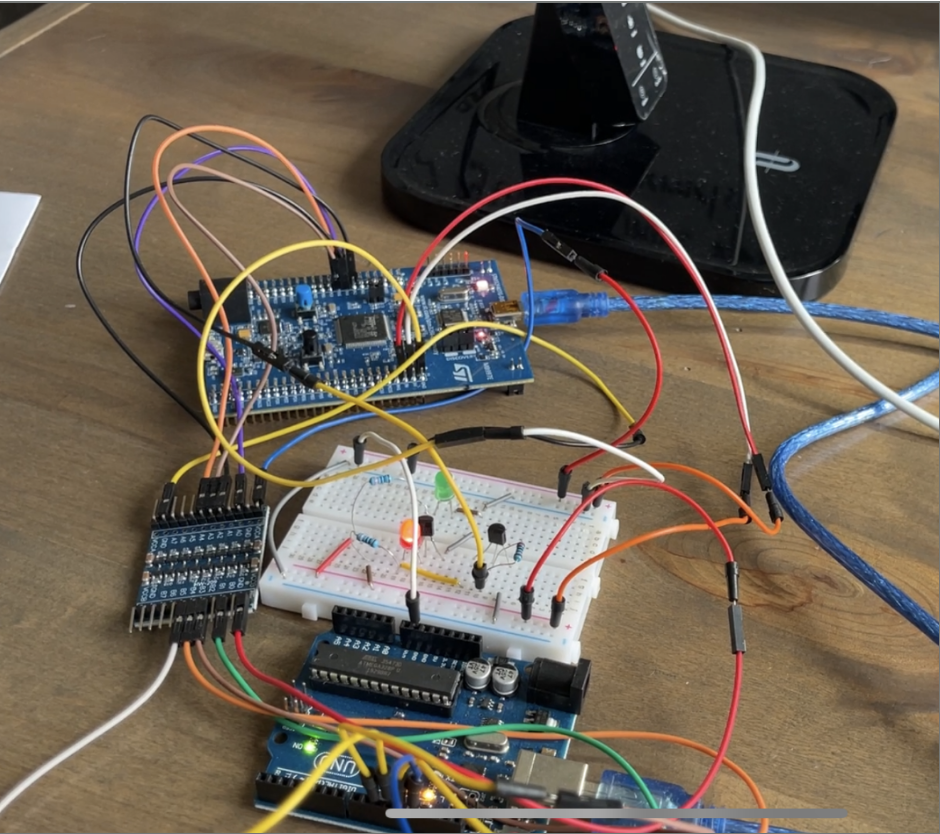

The computer sits on a custom soldered circuit board (a PCB prototype) that has gone through bring-up and hardware-in-the-loop testing. I also debugged the firmware over JTAG using OpenOCD with GDB. The circuitry provides reliable connections between the peripherals and the computer, limits how far the pick-up mechanism extends by using a DC motor encoder, and debounces the GPIO inputs with RC low-pass filters.
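
The encoder-based travel limit could look roughly like the following sketch. It assumes a quadrature encoder on the gate's DC motor, decoded in software; the names `gate_encoder_t`, `gate_encoder_update()`, `gate_motion_allowed()` and the count limit are hypothetical. Many microcontrollers can also decode quadrature signals in a hardware timer or pulse counter, which avoids missed edges at high motor speeds.

```c
#include <stdbool.h>
#include <stdint.h>

/* Quadrature decode table, index = (prev_AB << 2) | curr_AB, with A as the
 * high bit. Forward is assumed to be 00 -> 01 -> 11 -> 10 -> 00; invalid
 * double steps and "no change" both contribute 0. */
static const int8_t QDEC_TABLE[16] = {
     0, +1, -1,  0,
    -1,  0,  0, +1,
    +1,  0,  0, -1,
     0, -1, +1,  0,
};

typedef struct {
    uint8_t prev_ab;    /* last sampled A/B pair (2 bits) */
    int32_t count;      /* position in encoder counts, 0 = fully retracted */
    int32_t max_count;  /* hypothetical extension limit in counts */
} gate_encoder_t;

/* Feed one A/B sample, e.g. from a pin-change interrupt. */
void gate_encoder_update(gate_encoder_t *e, bool a, bool b) {
    uint8_t ab = (uint8_t)((a ? 2u : 0u) | (b ? 1u : 0u));
    e->count += QDEC_TABLE[(e->prev_ab << 2) | ab];
    e->prev_ab = ab;
}

/* Whether the motor may keep moving in the requested direction. */
bool gate_motion_allowed(const gate_encoder_t *e, bool extending) {
    return extending ? (e->count < e->max_count) : (e->count > 0);
}
```

The motor control loop would check `gate_motion_allowed()` before each drive command and cut the motor as soon as it returns false, so the mechanism cannot overshoot its mechanical range.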
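
As a rough sizing check for an RC debounce filter, assume (this is not taken from the board's schematic) that when the switch releases, the filtered input charges through an effective resistance $R$ into a capacitor $C$, and that the microcontroller reads it as high once it crosses the logic threshold $V_{IH}$:

$$
v(t) = V_{DD}\left(1 - e^{-t/RC}\right)
\quad\Longrightarrow\quad
t_{\mathrm{high}} = RC \,\ln\!\left(\frac{V_{DD}}{V_{DD} - V_{IH}}\right)
$$

With hypothetical values $R = 10\,\mathrm{k\Omega}$, $C = 1\,\mu\mathrm{F}$ and $V_{IH} = 0.7\,V_{DD}$, this gives $RC = 10\,\mathrm{ms}$ and $t_{\mathrm{high}} = 10\,\mathrm{ms} \cdot \ln(1/0.3) \approx 12\,\mathrm{ms}$, which is long enough to mask contact bounce that lasts on the order of milliseconds. Because the filtered edge is slow, a Schmitt-trigger input keeps noise near the threshold from producing multiple transitions.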